11.1: Correlation

11.1.1: An Intuitive Approach to Relationships

Correlation refers to any of a broad class of statistical relationships involving dependence.

Learning Objective

Recognize the fundamental meanings of correlation and dependence.

Key Points

- Dependence refers to any statistical relationship between two random variables or two sets of data.

- Correlations are useful because they can indicate a predictive relationship that can be exploited in practice.

- Formally, dependence refers to any situation in which random variables do not satisfy a mathematical condition of probabilistic independence.

- In loose usage, correlation can refer to any departure of two or more random variables from independence, but technically it refers to any of several more specialized types of relationship between mean values.

Key Term

- correlation

-

One of the several measures of the linear statistical relationship between two random variables, indicating both the strength and direction of the relationship.

Researchers often want to know how two or more variables are related. For example, is there a relationship between the grade on the second math exam a student takes and the grade on the final exam? If there is a relationship, what is it and how strong is it? As another example, your income may be determined by your education and your profession. The amount you pay a repair person for labor is often determined by an initial amount plus an hourly fee. These are all examples of a statistical factor known as correlation. Note that the type of data described in these examples is bivariate (“bi” for two variables). In reality, statisticians use multivariate data, meaning many variables. As in our previous example, your income may be determined by your education, profession, years of experience or ability.

Correlation and Dependence

Dependence refers to any statistical relationship between two random variables or two sets of data. Correlation refers to any of a broad class of statistical relationships involving dependence. Familiar examples of dependent phenomena include the correlation between the physical statures of parents and their offspring and the correlation between the demand for a product and its price. Correlations are useful because they can indicate a predictive relationship that can be exploited in practice.

For example, an electrical utility may produce less power on a mild day based on the correlation between electricity demand and weather. In this example, there is a causal relationship, because extreme weather causes people to use more electricity for heating or cooling; however, statistical dependence is not sufficient to demonstrate the presence of such a causal relationship (i.e., correlation does not imply causation).

Formally, dependence refers to any situation in which random variables do not satisfy a mathematical condition of probabilistic independence. In loose usage, correlation can refer to any departure of two or more random variables from independence, but technically it refers to any of several more specialized types of relationship between mean values.

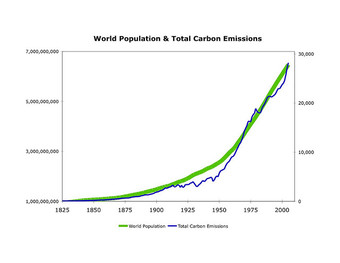

Correlation

This graph shows a positive correlation between world population and total carbon emissions.

11.1.2: Scatter Diagram

A scatter diagram is a type of mathematical diagram using Cartesian coordinates to display values for two variables in a set of data.

Learning Objective

Demonstrate the role that scatter diagrams play in revealing correlation.

Key Points

- The controlled parameter, or independent variable, is customarily plotted along the horizontal axis, while the measured or dependent variable is customarily plotted along the vertical axis.

- If no dependent variable exists, either type of variable can be plotted on either axis, and a scatter plot will illustrate only the degree of correlation between two variables.

- A scatter plot shows the direction and strength of a relationship between the variables.

- You can determine the strength of the relationship by looking at the scatter plot and seeing how close the points are to a line.

- When you look at a scatterplot, you want to notice the overall pattern and any deviations from the pattern.

Key Terms

- Cartesian coordinate

-

The coordinates of a point measured from an origin along a horizontal axis from left to right (the

$x$ -axis) and along a vertical axis from bottom to top (the$y$ -axis). - trend line

-

A line on a graph, drawn through points that vary widely, that shows the general trend of a real-world function (often generated using linear regression).

Example

- To display values for “lung capacity” (first variable) and how long that person could hold his breath, a researcher would choose a group of people to study, then measure each one’s lung capacity (first variable) and how long that person could hold his breath (second variable). The researcher would then plot the data in a scatter plot, assigning “lung capacity” to the horizontal axis, and “time holding breath” to the vertical axis. A person with a lung capacity of 400 ml who held his breath for 21.7 seconds would be represented by a single dot on the scatter plot at the point (400, 21.7) in the Cartesian coordinates. The scatter plot of all the people in the study would enable the researcher to obtain a visual comparison of the two variables in the data set, and will help to determine what kind of relationship there might be between the two variables.

A scatter plot, or diagram, is a type of mathematical diagram using Cartesian coordinates to display values for two variables in a set of data. The data is displayed as a collection of points, each having the value of one variable determining the position on the horizontal axis, and the value of the other variable determining the position on the vertical axis.

In the case of an experiment, a scatter plot is used when a variable exists that is below the control of the experimenter. The controlled parameter (or independent variable) is customarily plotted along the horizontal axis, while the measured (or dependent variable) is customarily plotted along the vertical axis. If no dependent variable exists, either type of variable can be plotted on either axis, and a scatter plot will illustrate only the degree of correlation (not causation) between two variables. This is the context in which we view scatter diagrams.

Relevance to Correlation

A scatter plot shows the direction and strength of a relationship between the variables. A clear direction happens given one of the following:

- High values of one variable occurring with high values of the other variable or low values of one variable occurring with low values of the other variable.

- High values of one variable occurring with low values of the other variable.

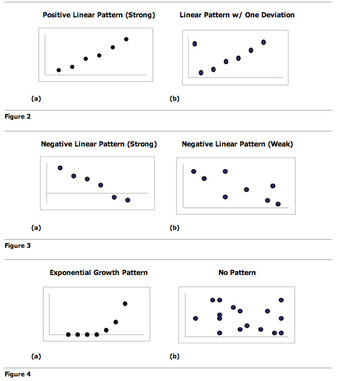

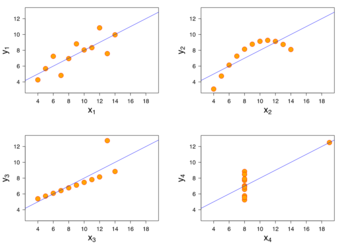

You can determine the strength of the relationship by looking at the scatter plot and seeing how close the points are to a line, a power function, an exponential function, or to some other type of function. When you look at a scatterplot, you want to notice the overall pattern and any deviations from the pattern. The following scatterplot examples illustrate these concepts .

Scatter Plot Patterns

An illustration of the various patterns that scatter plots can visualize.

Trend Lines

To study the correlation between the variables, one can draw a line of best fit (known as a “trend line”). An equation for the correlation between the variables can be determined by established best-fit procedures. For a linear correlation, the best-fit procedure is known as linear regression and is guaranteed to generate a correct solution in a finite time. No universal best-fit procedure is guaranteed to generate a correct solution for arbitrary relationships.

Other Uses of Scatter Plots

A scatter plot is also useful to show how two comparable data sets agree with each other. In this case, an identity line (i.e., a

line or

line) is often drawn as a reference. The more the two data sets agree, the more the scatters tend to concentrate in the vicinity of the identity line. If the two data sets are numerically identical, the scatters fall on the identity line exactly.

One of the most powerful aspects of a scatter plot, however, is its ability to show nonlinear relationships between variables. Furthermore, if the data is represented by a mixed model of simple relationships, these relationships will be visually evident as superimposed patterns.

11.1.3: Coefficient of Correlation

The correlation coefficient is a measure of the linear dependence between two variables

and

, giving a value between

and

.

Learning Objective

Compute Pearson’s product-moment correlation coefficient.

Key Points

- The correlation coefficient was developed by Karl Pearson from a related idea introduced by Francis Galton in the 1880s.

- Pearson’s correlation coefficient between two variables is defined as the covariance of the two variables divided by the product of their standard deviations.

- Pearson’s correlation coefficient when applied to a sample is commonly represented by the letter

. - The size of the correlation

indicates the strength of the linear relationship between

and

. - Values of

close to

or to

indicate a stronger linear relationship between

and

.

Key Terms

- covariance

-

A measure of how much two random variables change together.

- correlation

-

One of the several measures of the linear statistical relationship between two random variables, indicating both the strength and direction of the relationship.

The most common coefficient of correlation is known as the Pearson product-moment correlation coefficient, or Pearson’s

. It is a measure of the linear correlation (dependence) between two variables

and

, giving a value between

and

. It is widely used in the sciences as a measure of the strength of linear dependence between two variables. It was developed by Karl Pearson from a related idea introduced by Francis Galton in the 1880s.

Pearson’s correlation coefficient between two variables is defined as the covariance of the two variables divided by the product of their standard deviations. The form of the definition involves a “product moment”, that is, the mean (the first moment about the origin) of the product of the mean-adjusted random variables; hence the modifier product-moment in the name.

Pearson’s correlation coefficient when applied to a population is commonly represented by the Greek letter

(rho) and may be referred to as the population correlation coefficient or the population Pearson correlation coefficient.

Pearson’s correlation coefficient when applied to a sample is commonly represented by the letter

and may be referred to as the sample correlation coefficient or the sample Pearson correlation coefficient. The formula for

is as follows:

An equivalent expression gives the correlation coefficient as the mean of the products of the standard scores. Based on a sample of paired data

, the sample Pearson correlation coefficient is shown in:

Mathematical Properties

- The value of

is always between

and

:

. - The size of the correlation

indicates the strength of the linear relationship between

and

. Values of

close to

or

indicate a stronger linear relationship between

and

. - If

there is absolutely no linear relationship between

and

(no linear correlation). - A positive value of

means that when

increases,

tends to increase and when

decreases,

tends to decrease (positive correlation). - A negative value of

means that when

increases,

tends to decrease and when

decreases,

tends to increase (negative correlation). - If

, there is perfect positive correlation. If

, there is perfect negative correlation. In both these cases, all of the original data points lie on a straight line. Of course, in the real world, this will not generally happen. - The Pearson correlation coefficient is symmetric.

Another key mathematical property of the Pearson correlation coefficient is that it is invariant to separate changes in location and scale in the two variables. That is, we may transform

to

and transform

to

, where

,

,

, and

are constants, without changing the correlation coefficient. This fact holds for both the population and sample Pearson correlation coefficients.

Example

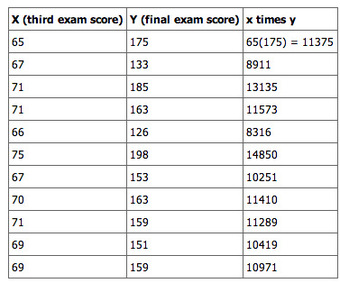

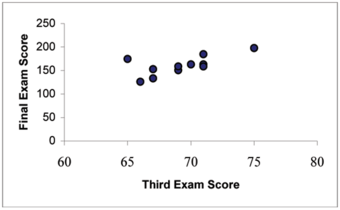

Consider the following example data set of scores on a third exam and scores on a final exam:

Example

This table shows an example data set of scores on a third exam and scores on a final exam.

To find the correlation of this data we need the summary statistics; means, standard deviations, sample size, and the sum of the product of

and

.

To find (

), multiply the

and

in each ordered pair together then sum these products. For this problem,

. To find the correlation coefficient we need the mean of

, the mean of

, the standard deviation of

and the standard deviation of

.

Put the summary statistics into the correlation coefficient formula and solve for

, the correlation coefficient.

11.1.4: Coefficient of Determination

The coefficient of determination provides a measure of how well observed outcomes are replicated by a model.

Learning Objective

Interpret the properties of the coefficient of determination in regard to correlation.

Key Points

- The coefficient of determination,

, is a statistic whose main purpose is either the prediction of future outcomes or the testing of hypotheses on the basis of other related information. - The most general definition of the coefficient of determination is illustrated in, where

is the residual sum of squares and

is the total sum of squares. -

, when expressed as a percent, represents the percent of variation in the dependent variable y that can be explained by variation in the independent variable

using the regression (best fit) line. -

when expressed as a percent, represents the percent of variation in

that is NOT explained by variation in

using the regression line. This can be seen as the scattering of the observed data points about the regression line.

Key Terms

- regression

-

An analytic method to measure the association of one or more independent variables with a dependent variable.

- correlation coefficient

-

Any of the several measures indicating the strength and direction of a linear relationship between two random variables.

The coefficient of determination (denoted

) is a statistic used in the context of statistical models. Its main purpose is either the prediction of future outcomes or the testing of hypotheses on the basis of other related information. It provides a measure of how well observed outcomes are replicated by the model, as the proportion of total variation of outcomes explained by the model. Values for

can be calculated for any type of predictive model, which need not have a statistical basis.

The Math

A data set will have observed values and modelled values, sometimes known as predicted values. The “variability” of the data set is measured through different sums of squares, such as:

- the total sum of squares (proportional to the sample variance);

- the regression sum of squares (also called the explained sum of squares); and

- the sum of squares of residuals, also called the residual sum of squares.

The most general definition of the coefficient of determination is:

where

is the residual sum of squares and

is the total sum of squares.

Properties and Interpretation of

The coefficient of determination is actually the square of the correlation coefficient. It is is usually stated as a percent, rather than in decimal form. In context of data,

can be interpreted as follows:

-

, when expressed as a percent, represents the percent of variation in the dependent variable

that can be explained by variation in the independent variable

using the regression (best fit) line. -

when expressed as a percent, represents the percent of variation in

that is NOT explained by variation in

using the regression line. This can be seen as the scattering of the observed data points about the regression line.

So

is a statistic that will give some information about the goodness of fit of a model. In regression, the

coefficient of determination is a statistical measure of how well the regression line approximates the real data points. An

of 1 indicates that the regression line perfectly fits the data.

In many (but not all) instances where

is used, the predictors are calculated by ordinary least-squares regression: that is, by minimizing

. In this case,

increases as we increase the number of variables in the model. This illustrates a drawback to one possible use of

, where one might keep adding variables to increase the

value. For example, if one is trying to predict the sales of a car model from the car’s gas mileage, price, and engine power, one can include such irrelevant factors as the first letter of the model’s name or the height of the lead engineer designing the car because the

will never decrease as variables are added and will probably experience an increase due to chance alone. This leads to the alternative approach of looking at the adjusted

. The explanation of this statistic is almost the same as

but it penalizes the statistic as extra variables are included in the model.

Note that

does not indicate whether:

- the independent variables are a cause of the changes in the dependent variable;

- omitted-variable bias exists;

- the correct regression was used;

- the most appropriate set of independent variables has been chosen;

- there is collinearity present in the data on the explanatory variables; or

- the model might be improved by using transformed versions of the existing set of independent variables.

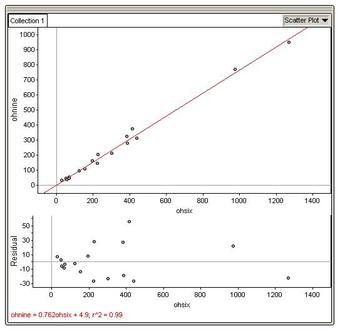

Example

Consider the third exam/final exam example introduced in the previous section. The correlation coefficient is

. Therefore, the coefficient of determination is

.

The interpretation of

in the context of this example is as follows. Approximately 44% of the variation (0.4397 is approximately 0.44) in the final exam grades can be explained by the variation in the grades on the third exam. Therefore approximately 56% of the variation (

) in the final exam grades can NOT be explained by the variation in the grades on the third exam.

11.1.5: Line of Best Fit

The trend line (line of best fit) is a line that can be drawn on a scatter diagram representing a trend in the data.

Learning Objective

Illustrate the method of drawing a trend line and what it represents.

Key Points

- A trend line could simply be drawn by eye through a set of data points, but more properly its position and slope are calculated using statistical techniques like linear regression.

- Trend lines are often used to argue that a particular action or event (such as training, or an advertising campaign) caused observed changes at a point in time.

- The mathematical process which determines the unique line of best fit is based on what is called the method of least squares.

- The line of best fit is drawn by (1) having the same number of data points on each side of the line – i.e., the line is in the median position; and (2) NOT going from the first data to the last – since extreme data often deviate from the general trend and this will give a biased sense of direction.

Key Term

- trend

-

the long-term movement in time series data after other components have been accounted for

The trend line, or line of best fit, is a line that can be drawn on a scatter diagram representing a trend in the data. It tells whether a particular data set has increased or decreased over a period of time. A trend line could simply be drawn by eye through a set of data points, but more properly its position and slope are calculated using statistical techniques like linear regression. Trend lines typically are straight lines, although some variations use higher degree polynomials depending on the degree of curvature desired in the line.

Trend lines are often used to argue that a particular action or event (such as training, or an advertising campaign) caused observed changes at a point in time. This is a simple technique, and does not require a control group, experimental design, or a sophisticated analysis technique. However, it suffers from a lack of scientific validity in cases where other potential changes can affect the data.

The mathematical process which determines the unique line of best fit is based on what is called the method of least squares – which explains why this line is sometimes called the least squares line. This method works by:

- finding the difference of each data

value from the line; - squaring all the differences;

- summing all the squared differences;

- repeating this process for all positions of the line until the smallest sum of squared differences is reached.

Drawing a Trend Line

The line of best fit is drawn by:

- having the same number of data points on each side of the line – i.e., the line is in the median position;

- NOT going from the first data to the last data – since extreme data often deviate from the general trend and this will give a biased sense of direction.

The closeness (or otherwise) of the cloud of data points to the line suggests the concept of spread or dispersion.

The graph below shows what happens when we draw the line of best fit from the first data to the last data – it does not go through the median position as there is one data above and three data below the blue line. This is a common mistake to avoid.

Trend Line Mistake

This graph shows what happens when we draw the line of best fit from the first data to the last data.

To determine the equation for the line of best fit:

- draw the scatterplot on a grid and draw the line of best fit;

- select two points on the line which are, as near as possible, on grid intersections so that you can accurately estimate their position;

- calculate the gradient (

) of the line using the formula: - write the partial equation;

- substitute one of the chosen points into the partial equation to evaluate the “

” term; - write the full equation of the line.

Example

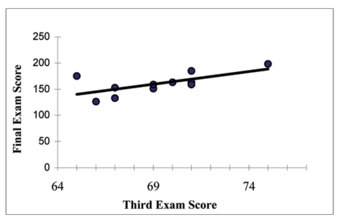

Consider the data in the graph below:

Example Graph

This graph will be used in our example for drawing a trend line.

To determine the equation for the line of best fit:

- a computer application has calculated and plotted the line of best fit for the data – it is shown as a black line – and it is in the median position with 3 data on one side and 3 data on the other side;

- the two points chosen on the line are

and

; - calculate the gradient (

) of the line using the formula:

- the part equation:

- substitute the point

into the equation:

- write the full equation of the line:

11.1.6: Other Types of Correlation Coefficients

Other types of correlation coefficients include intraclass correlation and the concordance correlation coefficient.

Learning Objective

Distinguish the intraclass and concordance correlation coefficients from previously discussed correlation coefficients.

Key Points

- The intraclass correlation is a descriptive statistic that can be used when quantitative measurements are made on units that are organized into groups.

- It describes how strongly units in the same group resemble each other.

- The concordance correlation coefficient measures the agreement between two variables (e.g., to evaluate reproducibility or for inter-rater reliability).

- Whereas Pearson’s correlation coefficient is immune to whether the biased or unbiased version for estimation of the variance is used, the concordance correlation coefficient is not.

Key Terms

- concordance

-

Agreement, accordance, or consonance.

- random effect model

-

A kind of hierarchical linear model assuming that the dataset being analyzed consists of a hierarchy of different populations whose differences relate to that hierarchy.

Intraclass Correlation

The intraclass correlation (or the intraclass correlation coefficient, abbreviated ICC) is a descriptive statistic that can be used when quantitative measurements are made on units that are organized into groups. It describes how strongly units in the same group resemble each other. While it is viewed as a type of correlation, unlike most other correlation measures it operates on data structured as groups rather than data structured as paired observations.

The intraclass correlation is commonly used to quantify the degree to which individuals with a fixed degree of relatedness (e.g., full siblings) resemble each other in terms of a quantitative trait. Another prominent application is the assessment of consistency or reproducibility of quantitative measurements made by different observers measuring the same quantity.

The intraclass correlation can be regarded within the framework of analysis of variance (ANOVA), and more recently it has been regarded in the framework of a random effect model. Most of the estimators can be defined in terms of the random effects model in:

where

is the

th observation in the

th group,

is an unobserved overall mean,

is an unobserved random effect shared by all values in group

, and

is an unobserved noise term. For the model to be identified, the

and

are assumed to have expected value zero and to be uncorrelated with each other. Also, the

are assumed to be identically distributed, and the

are assumed to be identically distributed. The variance of

is denoted

and the variance of

is denoted

. The population ICC in this framework is shown below:

Relationship to Pearson’s Correlation Coefficient

One key difference between the two statistics is that in the ICC, the data are centered and scaled using a pooled mean and standard deviation; whereas in the Pearson correlation, each variable is centered and scaled by its own mean and standard deviation. This pooled scaling for the ICC makes sense because all measurements are of the same quantity (albeit on units in different groups). For example, in a paired data set where each “pair” is a single measurement made for each of two units (e.g., weighing each twin in a pair of identical twins) rather than two different measurements for a single unit (e.g., measuring height and weight for each individual), the ICC is a more natural measure of association than Pearson’s correlation.

An important property of the Pearson correlation is that it is invariant to application of separate linear transformations to the two variables being compared. Thus, if we are correlating

and

, where, say,

, the Pearson correlation between

and

is 1: a perfect correlation. This property does not make sense for the ICC, since there is no basis for deciding which transformation is applied to each value in a group. However if all the data in all groups are subjected to the same linear transformation, the ICC does not change.

Concordance Correlation Coefficient

The concordance correlation coefficient measures the agreement between two variables (e.g., to evaluate reproducibility or for inter-rater reliability). The formula is written as:

where

and

are the means for the two variables and

and

are the corresponding variances.

Relation to Other Measures of Correlation

Whereas Pearson’s correlation coefficient is immune to whether the biased or unbiased version for estimation of the variance is used, the concordance correlation coefficient is not.

The concordance correlation coefficient is nearly identical to some of the measures called intraclass correlations. Comparisons of the concordance correlation coefficient with an “ordinary” intraclass correlation on different data sets will find only small differences between the two correlations.

11.1.7: Variation and Prediction Intervals

A prediction interval is an estimate of an interval in which future observations will fall with a certain probability given what has already been observed.

Learning Objective

Formulate a prediction interval and compare it to other types of statistical intervals.

Key Points

- A prediction interval bears the same relationship to a future observation that a frequentist confidence interval or Bayesian credible interval bears to an unobservable population parameter.

- In Bayesian terms, a prediction interval can be described as a credible interval for the variable itself, rather than for a parameter of the distribution thereof.

- The concept of prediction intervals need not be restricted to the inference of just a single future sample value but can be extended to more complicated cases.

- Since prediction intervals are only concerned with past and future observations, rather than unobservable population parameters, they are advocated as a better method than confidence intervals by some statisticians.

Key Terms

- confidence interval

-

A type of interval estimate of a population parameter used to indicate the reliability of an estimate.

- credible interval

-

An interval in the domain of a posterior probability distribution used for interval estimation.

In predictive inference, a prediction interval is an estimate of an interval in which future observations will fall, with a certain probability, given what has already been observed. A prediction interval bears the same relationship to a future observation that a frequentist confidence interval or Bayesian credible interval bears to an unobservable population parameter. Prediction intervals predict the distribution of individual future points, whereas confidence intervals and credible intervals of parameters predict the distribution of estimates of the true population mean or other quantity of interest that cannot be observed. Prediction intervals are also present in forecasts; however, some experts have shown that it is difficult to estimate the prediction intervals of forecasts that have contrary series. Prediction intervals are often used in regression analysis.

For example, let’s say one makes the parametric assumption that the underlying distribution is a normal distribution and has a sample set

. Then, confidence intervals and credible intervals may be used to estimate the population mean

and population standard deviation

of the underlying population, while prediction intervals may be used to estimate the value of the next sample variable,

.

Alternatively, in Bayesian terms, a prediction interval can be described as a credible interval for the variable itself, rather than for a parameter of the distribution thereof.

The concept of prediction intervals need not be restricted to the inference of just a single future sample value but can be extended to more complicated cases. For example, in the context of river flooding, where analyses are often based on annual values of the largest flow within the year, there may be interest in making inferences about the largest flood likely to be experienced within the next 50 years.

Since prediction intervals are only concerned with past and future observations, rather than unobservable population parameters, they are advocated as a better method than confidence intervals by some statisticians.

Prediction Intervals in the Normal Distribution

Given a sample from a normal distribution, whose parameters are unknown, it is possible to give prediction intervals in the frequentist sense — i.e., an interval

based on statistics of the sample such that on repeated experiments,

falls in the interval the desired percentage of the time.

A general technique of frequentist prediction intervals is to find and compute a pivotal quantity of the observables

– meaning a function of observables and parameters whose probability distribution does not depend on the parameters – that can be inverted to give a probability of the future observation

falling in some interval computed in terms of the observed values so far. The usual method of constructing pivotal quantities is to take the difference of two variables that depend on location, so that location cancels out, and then take the ratio of two variables that depend on scale, so that scale cancels out. The most familiar pivotal quantity is the Student’s

-statistic, which can be derived by this method.

A prediction interval

for a future observation

in a normal distribution

with known mean and variance may easily be calculated from the formula:

where:

the standard score of X, is standard normal distributed. The prediction interval is conventionally written as:

For example, to calculate the 95% prediction interval for a normal distribution with a mean (

) of 5 and a standard deviation (

) of 1, then

is approximately 2. Therefore, the lower limit of the prediction interval is approximately

, and the upper limit is approximately 7, thus giving a prediction interval of approximately 3 to 7.

Standard Score and Prediction Interval

Prediction interval (on the

-axis) given from

(the quantile of the standard score, on the

-axis). The

-axis is logarithmically compressed (but the values on it are not modified).

11.1.8: Rank Correlation

A rank correlation is a statistic used to measure the relationship between rankings of ordinal variables or different rankings of the same variable.

Learning Objective

Define rank correlation and illustrate how it differs from linear correlation.

Key Points

- A rank correlation coefficient measures the degree of similarity between two rankings and can be used to assess the significance of the relation between them.

- If one the variable decreases as the other increases, the rank correlation coefficients will be negative.

- An increasing rank correlation coefficient implies increasing agreement between rankings.

Key Terms

- Spearman’s rank correlation coefficient

-

A nonparametric measure of statistical dependence between two variables that assesses how well the relationship between two variables can be described using a monotonic function.

- rank correlation coefficient

-

A measure of the degree of similarity between two rankings that can be used to assess the significance of the relation between them.

- Kendall’s rank correlation coefficient

-

A statistic used to measure the association between two measured quantities; specifically, it measures the similarity of the orderings of the data when ranked by each of the quantities.

A rank correlation is any of several statistics that measure the relationship between rankings of different ordinal variables or different rankings of the same variable. In this context, a “ranking” is the assignment of the labels “first”, “second”, “third”, et cetera, to different observations of a particular variable. A rank correlation coefficient measures the degree of similarity between two rankings and can be used to assess the significance of the relation between them.

If, for example, one variable is the identity of a college basketball program and another variable is the identity of a college football program, one could test for a relationship between the poll rankings of the two types of program. One could then ask, do colleges with a higher-ranked basketball program tend to have a higher-ranked football program? A rank correlation coefficient can measure that relationship, and the measure of significance of the rank correlation coefficient can show whether the measured relationship is small enough to be likely to be a coincidence.

If there is only one variable—for example, the identity of a college football program—but it is subject to two different poll rankings (say, one by coaches and one by sportswriters), then the similarity of the two different polls’ rankings can be measured with a rank correlation coefficient.

Rank Correlation Coefficients

Rank correlation coefficients, such as Spearman’s rank correlation coefficient and Kendall’s rank correlation coefficient, measure the extent to which as one variable increases the other variable tends to increase, without requiring that increase to be represented by a linear relationship .

Spearman’s Rank Correlation

This graph shows a Spearman rank correlation of 1 and a Pearson correlation coefficient of 0.88. A Spearman correlation of 1 results when the two variables being compared are monotonically related, even if their relationship is not linear. In contrast, this does not give a perfect Pearson correlation.

If as the one variable increases the other decreases, the rank correlation coefficients will be negative. It is common to regard these rank correlation coefficients as alternatives to Pearson’s coefficient, used either to reduce the amount of calculation or to make the coefficient less sensitive to non-normality in distributions. However, this view has little mathematical basis, as rank correlation coefficients measure a different type of relationship than the Pearson product-moment correlation coefficient. They are best seen as measures of a different type of association rather than as alternative measure of the population correlation coefficient.

An increasing rank correlation coefficient implies increasing agreement between rankings. The coefficient is inside the interval

and assumes the value:

-

if the disagreement between the two rankings is perfect: one ranking is the reverse of the other; - 0 if the rankings are completely independent; or

- 1 if the agreement between the two rankings is perfect: the two rankings are the same.

Nature of Rank Correlation

To illustrate the nature of rank correlation, and its difference from linear correlation, consider the following four pairs of numbers

:

As we go from each pair to the next pair,

increases, and so does

. This relationship is perfect, in the sense that an increase in

is always accompanied by an increase in

. This means that we have a perfect rank correlation and both Spearman’s correlation coefficient and Kendall’s correlation coefficient are 1. In this example, the Pearson product-moment correlation coefficient is 0.7544, indicating that the points are far from lying on a straight line.

In the same way, if

always decreases when

increases, the rank correlation coefficients will be

while the Pearson product-moment correlation coefficient may or may not be close to

. This depends on how close the points are to a straight line. However, in the extreme case of perfect rank correlation, when the two coefficients are both equal (being both

or both

), this is not in general so, and values of the two coefficients cannot meaningfully be compared. For example, for the three pairs

,

,

, Spearman’s coefficient is

, while Kendall’s coefficient is

.

11.2: More About Correlation

11.2.1: Ecological Fallacy

An ecological fallacy is an interpretation of statistical data where inferences about individuals are deduced from inferences about the group as a whole.

Learning Objective

Discuss ecological fallacy in terms of aggregate versus individual inference and give specific examples of its occurrence.

Key Points

- Ecological fallacy can refer to the following fallacy: the average for a group is approximated by the average in the total population divided by the group size.

- A striking ecological fallacy is Simpson’s paradox.

- Another example of ecological fallacy is when the average of a population is assumed to have an interpretation in terms of likelihood at the individual level.

- Aggregate regressions lose individual level data but individual regressions add strong modeling assumptions.

Key Terms

- Simpson’s paradox

-

That the association of two variables for one subset of a population may be similar to the association of those variables in another subset, but different from the association of the variables in the total population.

- ecological correlation

-

A correlation between two variables that are group parameters, in contrast to a correlation between two variables that describe individuals.

Confusion Between Groups and Individuals

Ecological fallacy can refer to the following statistical fallacy: the correlation between individual variables is deduced from the correlation of the variables collected for the group to which those individuals belong. As an example, assume that at the individual level, being Protestant impacts negatively one’s tendency to commit suicide, but the probability that one’s neighbor commits suicide increases one’s tendency to become Protestant. Then, even if at the individual level there is negative correlation between suicidal tendencies and Protestantism, there can be a positive correlation at the aggregate level.

Choosing Between Aggregate and Individual Inference

Running regressions on aggregate data is not unacceptable if one is interested in the aggregate model. For instance, as a governor, it is correct to make inferences about the effect the size of a police force would have on the crime rate at the state level, if one is interested in the policy implication of a rise in police force. However, an ecological fallacy would happen if a city council deduces the impact of an increase in the police force on the crime rate at the city level from the correlation at the state level.

Choosing to run aggregate or individual regressions to understand aggregate impacts on some policy depends on the following trade off: aggregate regressions lose individual level data but individual regressions add strong modeling assumptions.

Some researchers suggest that the ecological correlation gives a better picture of the outcome of public policy actions, thus they recommend the ecological correlation over the individual level correlation for this purpose. Other researchers disagree, especially when the relationships among the levels are not clearly modeled. To prevent ecological fallacy, researchers with no individual data can model first what is occurring at the individual level, then model how the individual and group levels are related, and finally examine whether anything occurring at the group level adds to the understanding of the relationship.

Groups and Total Averages

Ecological fallacy can also refer to the following fallacy: the average for a group is approximated by the average in the total population divided by the group size. Suppose one knows the number of Protestants and the suicide rate in the USA, but one does not have data linking religion and suicide at the individual level. If one is interested in the suicide rate of Protestants, it is a mistake to estimate it by the total suicide rate divided by the number of Protestants.

Simpson’s Paradox

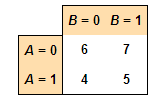

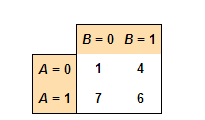

A striking ecological fallacy is Simpson’s paradox, diagramed in . Simpson’s paradox refers to the fact, when comparing two populations divided in groups of different sizes, the average of some variable in the first population can be higher in every group and yet lower in the total population.

Simpson’s Paradox

Simpson’s paradox for continuous data: a positive trend appears for two separate groups (blue and red), a negative trend (black, dashed) appears when the data are combined.

Mean and Median

A third example of ecological fallacy is when the average of a population is assumed to have an interpretation in terms of likelihood at the individual level.

For instance, if the average score of group A is larger than zero, it does not mean that a random individual of group A is more likely to have a positive score. Similarly, if a particular group of people is measured to have a lower average IQ than the general population, it is an error to conclude that a randomly selected member of the group is more likely to have a lower IQ than the average general population. Mathematically, this comes from the fact that a distribution can have a positive mean but a negative median. This property is linked to the skewness of the distribution.

Consider the following numerical example:

Group A: 80% of people got 40 points and 20% of them got 95 points. The average score is 51 points.

Group B: 50% of people got 45 points and 50% got 55 points. The average score is 50 points.

If we pick two people at random from A and B, there are 4 possible outcomes:

- A – 40, B – 45 (B wins, 40% probability)

- A – 40, B – 55 (B wins, 40% probability)

- A – 95, B – 45 (A wins, 10% probability)

- A – 95, B – 55 (A wins, 10% probability)

Although Group A has a higher average score, 80% of the time a random individual of A will score lower than a random individual of B.

11.2.2: Correlation is Not Causation

The conventional dictum “correlation does not imply causation” means that correlation cannot be used to infer a causal relationship between variables.

Learning Objective

Recognize that although correlation can indicate the existence of a causal relationship, it is not a sufficient condition to definitively establish such a relationship

Key Points

- The assumption that correlation proves causation is considered a questionable cause logical fallacy, in that two events occurring together are taken to have a cause-and-effect relationship.

- As with any logical fallacy, identifying that the reasoning behind an argument is flawed does not imply that the resulting conclusion is false.

- In the cum hoc ergo propter hoc logical fallacy, one makes a premature conclusion about causality after observing only a correlation between two or more factors.

Key Terms

- convergent cross mapping

-

A statistical test that (like the Granger Causality test) tests whether one variable predicts another; unlike most other tests that establish a coefficient of correlation, but not a cause-and-effect relationship.

- Granger causality test

-

A statistical hypothesis test for determining whether one time series is useful in forecasting another.

- tautology

-

A statement that is true for all values of its variables.

The conventional dictum that “correlation does not imply causation” means that correlation cannot be used to infer a causal relationship between the variables. This dictum does not imply that correlations cannot indicate the potential existence of causal relations. However, the causes underlying the correlation, if any, may be indirect and unknown, and high correlations also overlap with identity relations (tautology) where no causal process exists. Consequently, establishing a correlation between two variables is not a sufficient condition to establish a causal relationship (in either direction). Many statistical tests calculate correlation between variables. A few go further and calculate the likelihood of a true causal relationship. Examples include the Granger causality test and convergent cross mapping.

The assumption that correlation proves causation is considered a “questionable cause logical fallacy,” in that two events occurring together are taken to have a cause-and-effect relationship. This fallacy is also known as cum hoc ergo propter hoc, Latin for “with this, therefore because of this,” and “false cause. ” Consider the following:

In a widely studied example, numerous epidemiological studies showed that women who were taking combined hormone replacement therapy (HRT) also had a lower-than-average incidence of coronary heart disease (CHD), leading doctors to propose that HRT was protective against CHD. But randomized controlled trials showed that HRT caused a small but statistically significant increase in risk of CHD. Re-analysis of the data from the epidemiological studies showed that women undertaking HRT were more likely to be from higher socio-economic groups with better-than-average diet and exercise regimens. The use of HRT and decreased incidence of coronary heart disease were coincident effects of a common cause (i.e. the benefits associated with a higher socioeconomic status), rather than cause and effect, as had been supposed.

As with any logical fallacy, identifying that the reasoning behind an argument is flawed does not imply that the resulting conclusion is false. In the instance above, if the trials had found that hormone replacement therapy caused a decrease in coronary heart disease, but not to the degree suggested by the epidemiological studies, the assumption of causality would have been correct, although the logic behind the assumption would still have been flawed.

General Pattern

For any two correlated events A and B, the following relationships are possible:

- A causes B;

- B causes A;

- A and B are consequences of a common cause, but do not cause each other;

- There is no connection between A and B; the correlation is coincidental.

Less clear-cut correlations are also possible. For example, causality is not necessarily one-way; in a predator-prey relationship, predator numbers affect prey, but prey numbers (e.g., food supply) also affect predators.

The cum hoc ergo propter hoc logical fallacy can be expressed as follows:

- A occurs in correlation with B.

- Therefore, A causes B.

In this type of logical fallacy, one makes a premature conclusion about causality after observing only a correlation between two or more factors. Generally, if one factor (A) is observed to only be correlated with another factor (B), it is sometimes taken for granted that A is causing B, even when no evidence supports it. This is a logical fallacy because there are at least five possibilities:

- A may be the cause of B.

- B may be the cause of A.

- Some unknown third factor C may actually be the cause of both A and B.

- There may be a combination of the above three relationships. For example, B may be the cause of A at the same time as A is the cause of B (contradicting that the only relationship between A and B is that A causes B). This describes a self-reinforcing system.

- The “relationship” is a coincidence or so complex or indirect that it is more effectively called a coincidence (i.e., two events occurring at the same time that have no direct relationship to each other besides the fact that they are occurring at the same time). A larger sample size helps to reduce the chance of a coincidence, unless there is a systematic error in the experiment.

In other words, there can be no conclusion made regarding the existence or the direction of a cause and effect relationship only from the fact that A and B are correlated. Determining whether there is an actual cause and effect relationship requires further investigation, even when the relationship between A and B is statistically significant, a large effect size is observed, or a large part of the variance is explained.

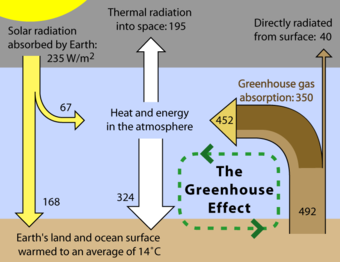

Greenhouse Effect

The greenhouse effect is a well-known cause-and-effect relationship. While well-established, this relationship is still susceptible to logical fallacy due to the complexity of the system.

11.3: Regression

11.3.1: Predictions and Probabilistic Models

Regression models are often used to predict a response variable

from an explanatory variable

.

Learning Objective

Explain how to estimate the relationship among variables using regression analysis

Key Points

- Regression models predict a value of the

variable, given known values of the

variables. Prediction within the range of values in the data set used for model-fitting is known informally as interpolation. - Prediction outside this range of the data is known as extrapolation. The further the extrapolation goes outside the data, the more room there is for the model to fail due to differences between the assumptions and the sample data or the true values.

- There are certain necessary conditions for regression inference: observations must be independent, the mean response has a straight-line relationship with

, the standard deviation of

is the same for all values of

, and the response

varies according to a normal distribution.

Key Terms

- interpolation

-

the process of estimating the value of a function at a point from its values at nearby points

- extrapolation

-

a calculation of an estimate of the value of some function outside the range of known values

Regression Analysis

In statistics, regression analysis is a statistical technique for estimating the relationships among variables. It includes many techniques for modeling and analyzing several variables when the focus is on the relationship between a dependent variable and one or more independent variables. More specifically, regression analysis helps one understand how the typical value of the dependent variable changes when any one of the independent variables is varied, while the other independent variables are held fixed. Most commonly, regression analysis estimates the conditional expectation of the dependent variable given the independent variables – that is, the average value of the dependent variable when the independent variables are fixed. Less commonly, the focus is on a quantile, or other location parameter of the conditional distribution of the dependent variable given the independent variables. In all cases, the estimation target is a function of the independent variables, called the regression function. In regression analysis, it is also of interest to characterize the variation of the dependent variable around the regression function, which can be described by a probability distribution.

Regression analysis is widely used for prediction and forecasting. Regression analysis is also used to understand which among the independent variables is related to the dependent variable, and to explore the forms of these relationships. In restricted circumstances, regression analysis can be used to infer causal relationships between the independent and dependent variables. However this can lead to illusions or false relationships, so caution is advisable; for example, correlation does not imply causation.

Making Predictions Using Regression Inference

Regression models predict a value of the

variable, given known values of the

variables. Prediction within the range of values in the data set used for model-fitting is known informally as interpolation. Prediction outside this range of the data is known as extrapolation. Performing extrapolation relies strongly on the regression assumptions. The further the extrapolation goes outside the data, the more room there is for the model to fail due to differences between the assumptions and the sample data or the true values.

It is generally advised that when performing extrapolation, one should accompany the estimated value of the dependent variable with a prediction interval that represents the uncertainty. Such intervals tend to expand rapidly as the values of the independent variable(s) move outside the range covered by the observed data.

However, this does not cover the full set of modelling errors that may be being made–in particular, the assumption of a particular form for the relation between

and

. A properly conducted regression analysis will include an assessment of how well the assumed form is matched by the observed data, but it can only do so within the range of values of the independent variables actually available. This means that any extrapolation is particularly reliant on the assumptions being made about the structural form of the regression relationship. Best-practice advice here is that a linear-in-variables and linear-in-parameters relationship should not be chosen simply for computational convenience, but that all available knowledge should be deployed in constructing a regression model. If this knowledge includes the fact that the dependent variable cannot go outside a certain range of values, this can be made use of in selecting the model – even if the observed data set has no values particularly near such bounds. The implications of this step of choosing an appropriate functional form for the regression can be great when extrapolation is considered. At a minimum, it can ensure that any extrapolation arising from a fitted model is “realistic” (or in accord with what is known).

Conditions for Regression Inference

A scatterplot shows a linear relationship between a quantitative explanatory variable

and a quantitative response variable

. Let’s say we have

observations on an explanatory variable

and a response variable

. Our goal is to study or predict the behavior of

for given values of

. Here are the required conditions for the regression model:

- Repeated responses

are independent of each other. - The mean response

has a straight-line (i.e., “linear”) relationship with

:

; the slope

and intercept

are unknown parameters. - The standard deviation of

(call it

) is the same for all values of

. The value of

is unknown. - For any fixed value of

, the response

varies according to a normal distribution.

The importance of data distribution in linear regression inference

A good rule of thumb when using the linear regression method is to look at the scatter plot of the data. This graph is a visual example of why it is important that the data have a linear relationship. Each of these four data sets has the same linear regression line and therefore the same correlation, 0.816. This number may at first seem like a strong correlation—but in reality the four data distributions are very different: the same predictions that might be true for the first data set would likely not be true for the second, even though the regression method would lead you to believe that they were more or less the same. Looking at panels 2, 3, and 4, you can see that a straight line is probably not the best way to represent these three data sets.

11.3.2: A Graph of Averages

A graph of averages and the least-square regression line are both good ways to summarize the data in a scatterplot.

Learning Objective

Contrast linear regression and graph of averages

Key Points

- In most cases, a line will not pass through all points in the data. A good line of regression makes the distances from the points to the line as small as possible. The most common method of doing this is called the “least-squares” method.

- Sometimes, a graph of averages is used to show a pattern between the

and

variables. In a graph of averages, the

-axis is divided up into intervals. The averages of the

values in those intervals are plotted against the midpoints of the intervals. - The graph of averages plots a typical

value in each interval: some of the points fall above the least-squares regression line, and some of the points fall below that line.

Key Terms

- interpolation

-

the process of estimating the value of a function at a point from its values at nearby points

- extrapolation

-

a calculation of an estimate of the value of some function outside the range of known values

- graph of averages

-

a plot of the average values of one variable (say

$y$ ) for small ranges of values of the other variable (say$x$ ), against the value of the second variable ($x$ ) at the midpoints of the ranges

Linear Regression vs. Graph of Averages

Linear (straight-line) relationships between two quantitative variables are very common in statistics. Often, when we have a scatterplot that shows a linear relationship, we’d like to summarize the overall pattern and make predictions about the data. This can be done by drawing a line through the scatterplot. The regression line drawn through the points describes how the dependent variable

changes with the independent variable

. The line is a model that can be used to make predictions, whether it is interpolation or extrapolation. The regression line has the form

, where

is the dependent variable,

is the independent variable,

is the slope (the amount by which

changes when

increases by one), and

is the

-intercept (the value of

when

).

In most cases, a line will not pass through all points in the data. A good line of regression makes the distances from the points to the line as small as possible. The most common method of doing this is called the “least-squares” method. The least-squares regression line is of the form

, with slope

(

is the correlation coefficient,

and

are the standard deviations of

and

). This line passes through the point

(the means of

and

).

Sometimes, a graph of averages is used to show a pattern between the

and

variables. In a graph of averages, the

-axis is divided up into intervals. The averages of the

values in those intervals are plotted against the midpoints of the intervals. If we needed to summarize the

values whose

values fall in a certain interval, the point plotted on the graph of averages would be good to use.

The points on a graph of averages do not usually line up in a straight line, making it different from the least-squares regression line. The graph of averages plots a typical

value in each interval: some of the points fall above the least-squares regression line, and some of the points fall below that line.

Least Squares Regression Line

Random data points and their linear regression.

11.3.3: The Regression Method

The regression method utilizes the average from known data to make predictions about new data.

Learning Objective

Contrast interpolation and extrapolation to predict data

Key Points

- If we know no information about the

-value, it is best to make predictions about the

-value using the average of the entire data set. - If we know the independent variable, or

-value, the best prediction of the dependent variable, or

-value, is the average of all the

-values for that specific

-value. - Generalizations and predictions are often made using the methods of interpolation and extrapolation.

Key Terms

- extrapolation

-

a calculation of an estimate of the value of some function outside the range of known values

- interpolation

-

the process of estimating the value of a function at a point from its values at nearby points

The Regression Method

The best way to understand the regression method is to use an example. Let’s say we have some data about students’ Math SAT scores and their freshman year GPAs in college. The average SAT score is 560, with a standard deviation of 75. The average first year GPA is 2.8, with a standard deviation of 0.5. Now, we choose a student at random and wish to predict his first year GPA. With no other information given, it is best to predict using the average. We predict his GPA is 2.8

Now, let’s say we pick another student. However, this time we know her Math SAT score was 680, which is significantly higher than the average. Instead of just predicting 2.8, this time we look at the graph of averages and predict her GPA is whatever the average is of all the students in our sample who also scored a 680 on the SAT. This is likely to be higher than 2.8.

To generalize the regression method:

- If you know no information (you don’t know the SAT score), it is best to make predictions using the average.

- If you know the independent variable, or

-value (you know the SAT score), the best prediction of the dependent variable, or

-value (in this case, the GPA), is the average of all the

-values for that specific

-value.

Generalization

In the example above, the college only has experience with students that have been admitted; however, it could also use the regression model for students that have not been admitted. There are some problems with this type of generalization. If the students admitted all had SAT scores within the range of 480 to 780, the regression model may not be a very good estimate for a student who only scored a 350 on the SAT.

Despite this issue, generalization is used quite often in statistics. Sometimes statisticians will use interpolation to predict data points within the range of known data points. For example, if no one before had received an exact SAT score of 650, we would predict his GPA by looking at the GPAs of those who scored 640 and 660 on the SAT.

Extrapolation is also frequently used, in which data points beyond the known range of values is predicted. Let’s say the highest SAT score of a student the college admitted was 780. What if we have a student with an SAT score of 800, and we want to predict her GPA? We can do this by extending the regression line. This may or may not be accurate, depending on the subject matter.

Extrapolation

An example of extrapolation, where data outside the known range of values is predicted. The red points are assumed known and the extrapolation problem consists of giving a meaningful value to the blue box at

.

11.3.4: The Regression Fallacy

The regression fallacy fails to account for natural fluctuations and rather ascribes cause where none exists.

Learning Objective

Illustrate examples of regression fallacy

Key Points

- Things such as golf scores, the earth’s temperature, and chronic back pain fluctuate naturally and usually regress towards the mean. The logical flaw is to make predictions that expect exceptional results to continue as if they were average.

- People are most likely to take action when variance is at its peak. Then, after results become more normal, they believe that their action was the cause of the change, when in fact, it was not causal.

- In essence, misapplication of regression to the mean can reduce all events to a “just so” story, without cause or effect. Such misapplication takes as a premise that all events are random, as they must be for the concept of regression to the mean to be validly applied.

Key Terms

- regression fallacy

-

flawed logic that ascribes cause where none exists

- post hoc fallacy

-

flawed logic that assumes just because A occurred before B, then A must have caused B to happen

What is the Regression Fallacy?

The regression (or regressive) fallacy is an informal fallacy. It ascribes cause where none exists. The flaw is failing to account for natural fluctuations. It is frequently a special kind of the post hoc fallacy.

Things such as golf scores, the earth’s temperature, and chronic back pain fluctuate naturally and usually regress towards the mean. The logical flaw is to make predictions that expect exceptional results to continue as if they were average. People are most likely to take action when variance is at its peak. Then, after results become more normal, they believe that their action was the cause of the change, when in fact, it was not causal.

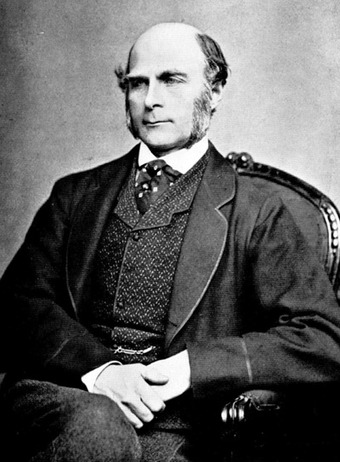

This use of the word “regression” was coined by Sir Francis Galton in a study from 1885 called “Regression Toward Mediocrity in Hereditary Stature. ” He showed that the height of children from very short or very tall parents would move towards the average. In fact, in any situation where two variables are less than perfectly correlated, an exceptional score on one variable may not be matched by an equally exceptional score on the other variable. The imperfect correlation between parents and children (height is not entirely heritable) means that the distribution of heights of their children will be centered somewhere between the average of the parents and the average of the population as whole. Thus, any single child can be more extreme than the parents, but the odds are against it.

Francis Galton

A picture of Sir Francis Galton, who coined the use of the word “regression. “

Examples of the Regression Fallacy

- When his pain got worse, he went to a doctor, after which the pain subsided a little. Therefore, he benefited from the doctor’s treatment.The pain subsiding a little after it has gotten worse is more easily explained by regression towards the mean. Assuming the pain relief was caused by the doctor is fallacious.

- The student did exceptionally poorly last semester, so I punished him. He did much better this semester. Clearly, punishment is effective in improving students’ grades. Often, exceptional performances are followed by more normal performances, so the change in performance might better be explained by regression towards the mean. Incidentally, some experiments have shown that people may develop a systematic bias for punishment and against reward because of reasoning analogous to this example of the regression fallacy.

- The frequency of accidents on a road fell after a speed camera was installed. Therefore, the speed camera has improved road safety. Speed cameras are often installed after a road incurs an exceptionally high number of accidents, and this value usually falls (regression to mean) immediately afterwards. Many speed camera proponents attribute this fall in accidents to the speed camera, without observing the overall trend.

- Some authors have claimed that the alleged “Sports Illustrated Cover Jinx” is a good example of a regression effect: extremely good performances are likely to be followed by less extreme ones, and athletes are chosen to appear on the cover of Sports Illustrated only after extreme performances. Assuming athletic careers are partly based on random factors, attributing this to a “jinx” rather than regression, as some athletes reportedly believed, would be an example of committing the regression fallacy.

Misapplication of the Regression Fallacy

On the other hand, dismissing valid explanations can lead to a worse situation. For example: After the Western Allies invaded Normandy, creating a second major front, German control of Europe waned. Clearly, the combination of the Western Allies and the USSR drove the Germans back.

The conclusion above is true, but what if instead we came to a fallacious evaluation: “Given that the counterattacks against Germany occurred only after they had conquered the greatest amount of territory under their control, regression to the mean can explain the retreat of German forces from occupied territories as a purely random fluctuation that would have happened without any intervention on the part of the USSR or the Western Allies.” This is clearly not the case. The reason is that political power and occupation of territories is not primarily determined by random events, making the concept of regression to the mean inapplicable (on the large scale).

In essence, misapplication of regression to the mean can reduce all events to a “just so” story, without cause or effect. Such misapplication takes as a premise that all events are random, as they must be for the concept of regression to the mean to be validly applied.

11.4: The Regression Line

11.4.1: Slope and Intercept

In the regression line equation the constant

is the slope of the line and

is the

-intercept.

Learning Objective

Model the relationship between variables in regression analysis

Key Points

- Linear regression is an approach to modeling the relationship between a dependent variable

and 1 or more independent variables denoted

. - The mathematical function of the regression line is expressed in terms of a number of parameters, which are the coefficients of the equation, and the values of the independent variable.

- The coefficients are numeric constants by which variable values in the equation are multiplied or which are added to a variable value to determine the unknown.

- In the regression line equation,

and

are the variables of interest in our data, with

the unknown or dependent variable and

the known or independent variable.

Key Terms

- slope

-

the ratio of the vertical and horizontal distances between two points on a line; zero if the line is horizontal, undefined if it is vertical.

- intercept

-

the coordinate of the point at which a curve intersects an axis

Regression Analysis

Regression analysis is the process of building a model of the relationship between variables in the form of mathematical equations. The general purpose is to explain how one variable, the dependent variable, is systematically related to the values of one or more independent variables. An independent variable is so called because we imagine its value varying freely across its range, while the dependent variable is dependent upon the values taken by the independent. The mathematical function is expressed in terms of a number of parameters that are the coefficients of the equation, and the values of the independent variable. The coefficients are numeric constants by which variable values in the equation are multiplied or which are added to a variable value to determine the unknown. A simple example is the equation for the regression line which follows:

Here, by convention,

and

are the variables of interest in our data, with

the unknown or dependent variable and

the known or independent variable. The constant

is slope of the line and

is the

-intercept — the value where the line cross the

axis. So,

and

are the coefficients of the equation.

Linear regression is an approach to modeling the relationship between a scalar dependent variable

and one or more explanatory (independent) variables denoted

. The case of one explanatory variable is called simple linear regression. For more than one explanatory variable, it is called multiple linear regression. (This term should be distinguished from multivariate linear regression, where multiple correlated dependent variables are predicted, rather than a single scalar variable).

11.4.2: Two Regression Lines

ANCOVA can be used to compare regression lines by testing the effect of a categorial value on a dependent variable, controlling the continuous covariate.

Learning Objective

Assess ANCOVA for analysis of covariance

Key Points

- Researchers, such as those working in the field of biology, commonly wish to compare regressions and determine causal relationships between two variables.

- Covariance is a measure of how much two variables change together and how strong the relationship is between them.

- ANCOVA evaluates whether population means of a dependent variable (DV) are equal across levels of a categorical independent variable (IV), while statistically controlling for the effects of other continuous variables that are not of primary interest, known as covariates (CV).

- ANCOVA can also be used to increase statistical power or adjust preexisting differences.

- It is also possible to see similar slopes between lines but a different intercept, which can be interpreted as a difference in magnitudes but not in the rate of change.

Key Terms

- statistical power

-

the probability that a statistical test will reject a false null hypothesis, that is, that it will not make a type II error, producing a false negative

- covariance

-

A measure of how much two random variables change together.

Researchers, such as those working in the field of biology, commonly wish to compare regressions and determine causal relationships between two variables. For example, comparing slopes between groups is a method that could be used by a biologist to assess different growth patterns of the development of different genetic factors between groups. Any difference between these factors should result in the presence of differing slopes in the two regression lines.

A method known as analysis of covariance (ANCOVA) can be used to compare two, or more, regression lines by testing the effect of a categorial value on a dependent variable while controlling for the effect of a continuous covariate.

ANCOVA

Covariance is a measure of how much two variables change together and how strong the relationship is between them. Analysis of covariance (ANCOVA) is a general linear model which blends ANOVA and regression. ANCOVA evaluates whether population means of a dependent variable (DV) are equal across levels of a categorical independent variable (IV), while statistically controlling for the effects of other continuous variables that are not of primary interest, known as covariates (CV). Therefore, when performing ANCOVA, we are adjusting the DV means to what they would be if all groups were equal on the CV.

Uses

Increase Power. ANCOVA can be used to increase statistical power (the ability to find a significant difference between groups when one exists) by reducing the within-group error variance.

ANCOVA

This pie chart shows the partitioning of variance within ANCOVA analysis.

In order to understand this, it is necessary to understand the test used to evaluate differences between groups, the

-test. The

-test is computed by dividing the explained variance between groups (e.g., gender difference) by the unexplained variance within the groups. Thus: